Bayes factor

In statistics, the use of Bayes factors is a Bayesian alternative to classical hypothesis testing.[1][2] Bayesian model comparison is a method of model selection based on Bayes factors.

Definition

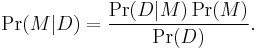

The posterior probability Pr(M|D) of a model M given data D is given by Bayes' theorem:

$$\Pr(M \mid D) = \frac{\Pr(D \mid M)\,\Pr(M)}{\Pr(D)}.$$

The key data-dependent term Pr(D|M) is a likelihood, and is sometimes called the evidence for model M: it represents the probability that the data D would be produced under the assumption of model M. Evaluating it correctly is the key to Bayesian model comparison. The evidence is usually the normalizing constant or partition function of another inference, namely the inference of the parameters of model M given the data D.
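Bayes' theorem can be applied across a finite set of candidate models: Pr(D) is then the sum of Pr(D|M) Pr(M) over the models, so the evidence for each model is the quantity that drives the comparison. The following is a minimal Python sketch, assuming the log-evidences have already been computed. The function `posterior_model_probs` and its inputs are hypothetical, and working in log space is a design choice to avoid underflow, since evidences are often extremely small numbers.

```python
import math


def posterior_model_probs(log_evidences, priors):
    """Return Pr(M_i | D) for each candidate model via Bayes' theorem.

    log_evidences: log Pr(D | M_i) for each model (assumed precomputed).
    priors: Pr(M_i) for each model, assumed positive and summing to 1.
    """
    # Unnormalised log posteriors: log Pr(D | M_i) + log Pr(M_i)
    log_joint = [le + math.log(p) for le, p in zip(log_evidences, priors)]
    # log Pr(D) by log-sum-exp, so tiny evidences do not underflow to zero
    m = max(log_joint)
    log_pd = m + math.log(sum(math.exp(lj - m) for lj in log_joint))
    return [math.exp(lj - log_pd) for lj in log_joint]


# Two equally probable models whose evidences are in the ratio 3:1
probs = posterior_model_probs([math.log(0.03), math.log(0.01)], [0.5, 0.5])
print(probs)  # approximately [0.75, 0.25]
```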

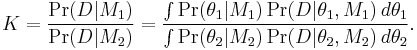

Given a model selection problem in which we have to choose between two models on the basis of observed data D, the plausibility of the two different models M1 and M2, parametrised by model parameter vectors θ1 and θ2, is assessed by the Bayes factor K given by

$$K = \frac{\Pr(D \mid M_1)}{\Pr(D \mid M_2)} = \frac{\int \Pr(\theta_1 \mid M_1)\,\Pr(D \mid \theta_1, M_1)\,d\theta_1}{\int \Pr(\theta_2 \mid M_2)\,\Pr(D \mid \theta_2, M_2)\,d\theta_2},$$

where Pr(D|Mi) is called the marginal likelihood for model i.
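The integrals in K rarely have closed forms. As a minimal sketch for a model with one scalar parameter, the marginal likelihood can be approximated by numerical quadrature. Composite Simpson's rule is used here purely for illustration; practical work often relies on other estimators, such as Laplace approximations or nested sampling. The helper `marginal_likelihood` is hypothetical. The check uses binomial data with a uniform prior, where the exact marginal likelihood is 1/(n+1).

```python
import math


def marginal_likelihood(likelihood, prior_pdf, lo, hi, n=2000):
    """Approximate Pr(D | M) = integral of Pr(D | theta, M) * Pr(theta | M)
    over [lo, hi] for a scalar theta, by composite Simpson's rule.
    n is the number of subintervals and must be even."""
    if n % 2:
        raise ValueError("n must be even for Simpson's rule")
    h = (hi - lo) / n
    total = 0.0
    for i in range(n + 1):
        theta = lo + i * h
        # Simpson weights: 1 at the ends, 4 at odd nodes, 2 at interior even nodes
        weight = 1 if i in (0, n) else (4 if i % 2 else 2)
        total += weight * likelihood(theta) * prior_pdf(theta)
    return total * h / 3


# Illustration: k successes in n_trials, uniform prior on q in [0, 1]
k, n_trials = 3, 10


def lik(q):
    return math.comb(n_trials, k) * q**k * (1 - q) ** (n_trials - k)


def uniform_prior(q):
    return 1.0


m2 = marginal_likelihood(lik, uniform_prior, 0.0, 1.0)  # about 1/11
m1 = lik(0.5)  # point-null model q = 1/2: nothing to integrate
K = m1 / m2    # Bayes factor of M1 against M2, about 1.289
```

A point-null model such as q = 1/2 needs no integration, because its prior puts all of its mass on a single value.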

If, instead of the Bayes factor integral, the likelihood corresponding to the maximum likelihood estimate of the parameter for each model is used, then the test becomes a classical likelihood-ratio test. Unlike a likelihood-ratio test, this Bayesian model comparison does not depend on any single set of parameters, as it integrates over all parameters in each model (with respect to the respective priors). An advantage of the use of Bayes factors is that it automatically, and quite naturally, includes a penalty for including too much model structure.[3] It thus guards against overfitting. For models where an explicit version of the likelihood is not available or is too costly to evaluate numerically, approximate Bayesian computation can be used for model selection in a Bayesian framework.[4]
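When the likelihood cannot be evaluated but data can be simulated, a rejection form of approximate Bayesian computation (ABC) can estimate posterior model probabilities. It does this by simulating from each model's prior and keeping only the simulations that reproduce the observed data. The sketch below is basic ABC rejection, not the specific method of the cited reference. It assumes equal prior model probabilities and uses a hypothetical coin-flip setting with 20 trials and 13 observed successes. All names are illustrative.

```python
import random


def abc_model_choice(observed, simulators, n_draws=50_000, tol=0, seed=0):
    """ABC rejection sampling over models (equal prior model probabilities assumed).

    simulators: one function per model; each draws parameters from that model's
    prior, simulates a dataset, and returns a summary statistic.
    Returns the fraction of accepted draws per model, an estimate of Pr(M_i | D).
    """
    rng = random.Random(seed)
    accepted = [0] * len(simulators)
    for _ in range(n_draws):
        i = rng.randrange(len(simulators))  # draw a model uniformly
        if abs(simulators[i](rng) - observed) <= tol:
            accepted[i] += 1
    total = sum(accepted)
    if total == 0:
        raise RuntimeError("no draws accepted; increase n_draws or tol")
    return [a / total for a in accepted]


N = 20  # hypothetical number of trials


def sim_fair(rng):
    # M1: success probability fixed at 1/2; returns the success count
    return sum(rng.random() < 0.5 for _ in range(N))


def sim_unknown(rng):
    # M2: success probability drawn from a uniform prior on [0, 1]
    q = rng.random()
    return sum(rng.random() < q for _ in range(N))


probs = abc_model_choice(observed=13, simulators=[sim_fair, sim_unknown])
```

Because the data here are a single discrete count, tol=0 is usable, and the acceptance fractions should approach the exact posterior model probabilities (about 0.61 for M1 in this setting). With continuous data, a positive tolerance and summary statistics are needed, and the result is then an approximation whose quality depends on those choices.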

Other approaches are:

- to treat model comparison as a decision problem, computing the expected value or cost of each model choice;

- to use Minimum Message Length (MML).

Interpretation

A value of K > 1 means that M1 is more strongly supported by the data under consideration than M2. Note that classical hypothesis testing gives one hypothesis (or model) preferred status (the 'null hypothesis') and only considers evidence against it. Harold Jeffreys gave a scale for interpretation of K:[5]

| K | dB | bits | Strength of evidence |
|---|---|---|---|
| < 1:1 | < 0 | < 0 | Negative (supports M2) |
| 1:1 to 3:1 | 0 to 5 | 0 to 1.6 | Barely worth mentioning |
| 3:1 to 10:1 | 5 to 10 | 1.6 to 3.3 | Substantial |
| 10:1 to 30:1 | 10 to 15 | 3.3 to 5.0 | Strong |
| 30:1 to 100:1 | 15 to 20 | 5.0 to 6.6 | Very strong |
| > 100:1 | > 20 | > 6.6 | Decisive |

The second column gives the corresponding weights of evidence in decibans (tenths of a power of 10); bits are added in the third column for clarity. According to I. J. Good, a change in a weight of evidence of 1 deciban or 1/3 of a bit (i.e. a change in an odds ratio from evens to about 5:4) is about as fine a distinction as humans can reasonably perceive in their degree of belief in a hypothesis in everyday use.[6]
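Jeffreys' scale and the unit conversions can be encoded as a small helper. This is a minimal sketch with hypothetical names. Treating each lower bound as inclusive is an assumption, because the table does not say which category a boundary value such as exactly 3:1 belongs to.

```python
import math

# Jeffreys' categories, checked from the top down; each lower bound on K
# is treated as inclusive (an assumption the table leaves open).
JEFFREYS_SCALE = [
    (100, "Decisive"),
    (30, "Very strong"),
    (10, "Strong"),
    (3, "Substantial"),
    (1, "Barely worth mentioning"),
]


def weight_of_evidence(K):
    """Return (decibans, bits) for a Bayes factor K > 0."""
    return 10 * math.log10(K), math.log2(K)


def jeffreys_label(K):
    """Map a Bayes factor K to Jeffreys' verbal strength of evidence."""
    for threshold, label in JEFFREYS_SCALE:
        if K >= threshold:
            return label
    return "Negative (supports M2)"
```

For example, `jeffreys_label(1.197)` returns "Barely worth mentioning". `weight_of_evidence(10 ** 0.1)` gives roughly (1.0, 0.332), consistent with Good's remark that 1 deciban is about 1/3 of a bit.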

The use of Bayes factors or classical hypothesis testing takes place in the context of inference rather than decision-making under uncertainty. That is, we merely wish to find out which hypothesis is true, rather than actually making a decision on the basis of this information. Frequentist statistics draws a strong distinction between these two because classical hypothesis tests are not coherent in the Bayesian sense. Bayesian procedures, including Bayes factors, are coherent, so there is no need to draw such a distinction. Inference is then simply regarded as a special case of decision-making under uncertainty in which the resulting action is to report a value. For decision-making, Bayesian statisticians might use a Bayes factor combined with a prior distribution and a loss function associated with making the wrong choice. In an inference context the loss function would take the form of a scoring rule. Using a logarithmic scoring rule, for example, leads to the expected utility taking the form of the Kullback–Leibler divergence.
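Turning a Bayes factor into a decision requires the two extra ingredients named above: prior model probabilities and a loss function. The following is a minimal sketch, assuming zero loss for a correct choice and fixed, hypothetical losses for each kind of wrong choice. The posterior odds are the prior odds multiplied by K.

```python
def choose_model(K, prior_m1=0.5, loss_wrong_m1=1.0, loss_wrong_m2=1.0):
    """Pick the model with the smaller posterior expected loss.

    K: Bayes factor Pr(D|M1) / Pr(D|M2).
    prior_m1: prior probability Pr(M1), strictly between 0 and 1.
    loss_wrong_m1: loss of choosing M1 when M2 is true (hypothetical value).
    loss_wrong_m2: loss of choosing M2 when M1 is true (hypothetical value).
    A correct choice is assumed to cost nothing.
    """
    prior_odds = prior_m1 / (1 - prior_m1)
    posterior_odds = K * prior_odds              # Pr(M1|D) / Pr(M2|D)
    p_m1 = posterior_odds / (1 + posterior_odds)  # Pr(M1|D)
    expected_loss_m1 = (1 - p_m1) * loss_wrong_m1
    expected_loss_m2 = p_m1 * loss_wrong_m2
    return "M1" if expected_loss_m1 <= expected_loss_m2 else "M2"
```

With K = 1.197 and equal priors and losses, this picks M1. Doubling the loss for wrongly choosing M1 (`loss_wrong_m1=2.0`) flips the decision to M2, even though the evidence is unchanged.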

Example

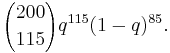

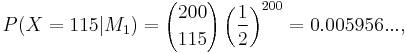

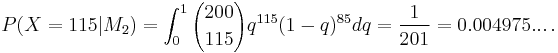

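Suppose we have a random variable which produces either a success or a failure. We want to compare a model M1 where the probability of success is q = ½, and another model M2 where q is completely unknown and we take a prior distribution for q which is uniform on [0,1]. We take a sample of 200, and find 115 successes and 85 failures. The likelihood can be calculated according to the binomial distribution:

$$\binom{200}{115} q^{115} (1-q)^{85}.$$

So we have

$$\Pr(X = 115 \mid M_1) = \binom{200}{115} \left(\tfrac{1}{2}\right)^{200} \approx 0.005956,$$

but

$$\Pr(X = 115 \mid M_2) = \int_0^1 \binom{200}{115} q^{115} (1-q)^{85}\,dq = \frac{1}{201} \approx 0.004975.$$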

The ratio is then 1.197..., which is "barely worth mentioning" even if it points very slightly towards M1.
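The numbers above can be reproduced with Python's standard library. This sketch uses rational arithmetic, so the closed-form value 1/201 comes out exactly. The variable names are illustrative.

```python
from fractions import Fraction
from math import comb, factorial

n, k = 200, 115  # sample size and number of successes in the example

# M1: q fixed at 1/2, so the evidence is just the binomial probability
p_m1 = Fraction(comb(n, k), 2**n)

# M2: uniform prior on q. The Beta integral gives
#   integral_0^1 q^k (1 - q)^(n - k) dq = k! (n - k)! / (n + 1)!
p_m2 = comb(n, k) * Fraction(factorial(k) * factorial(n - k), factorial(n + 1))

K = p_m1 / p_m2
print(float(p_m1), float(p_m2), float(K))
```

Here `p_m2` equals Fraction(1, 201) exactly, and `float(K)` is about 1.197.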

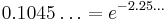

This is not the same as a classical likelihood ratio test, which would have found the maximum likelihood estimate for q, namely 115⁄200 = 0.575, and used that to get a ratio of 0.1045... (rather than averaging over all possible q), and so pointing towards M2. Alternatively, Edwards's "exchange rate" of two units of likelihood per degree of freedom suggests that M2 is preferable (just) to M1, as ln(1/0.1045...) ≈ 2.26 and 2.26 > 2: the extra likelihood compensates for the unknown parameter in M2.
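The classical likelihood ratio and Edwards's criterion can be checked with a few lines of Python. This sketch assumes that the maximum likelihood estimate is k/n, and it reads "units of likelihood" as units of natural-log likelihood (support). That reading is an interpretation, not a statement from the text.

```python
import math

n, k = 200, 115


def log_lik(q):
    # Binomial log-likelihood without the log C(n, k) term, which cancels in ratios
    return k * math.log(q) + (n - k) * math.log(1 - q)


q_mle = k / n                                    # 0.575
ratio = math.exp(log_lik(0.5) - log_lik(q_mle))  # L(q = 1/2) / L(q_mle), about 0.1045
support_gain = log_lik(q_mle) - log_lik(0.5)     # about 2.26 log-likelihood units
prefers_m2 = support_gain > 2                    # 2 units per extra parameter (Edwards)
```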

A frequentist hypothesis test of M1 (here considered as a null hypothesis) would have produced a more dramatic result, saying that M1 could be rejected at the 5% significance level. The probability of getting 115 or more successes from a sample of 200 if q = ½ is 0.0200..., and the two-tailed probability of getting a figure as extreme as or more extreme than 115 is 0.0400.... Note that 115 is more than two standard deviations away from 100 (the standard deviation is √50 ≈ 7.07).
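The quoted p-values can be reproduced exactly by summing binomial probabilities. The following is a minimal sketch using only the standard library. The variable names are illustrative.

```python
from fractions import Fraction
from math import comb

n, k = 200, 115
mean = n // 2  # 100 under M1 (q = 1/2)


def prob(j):
    # Exact Binomial(n, 1/2) probability of j successes
    return Fraction(comb(n, j), 2**n)


# One-tailed: P(X >= 115 | q = 1/2)
p_upper = float(sum(prob(j) for j in range(k, n + 1)))
# Two-tailed: at least as far from 100 as 115 is, i.e. X <= 85 or X >= 115
p_lower = float(sum(prob(j) for j in range(0, 2 * mean - k + 1)))
p_two_sided = p_upper + p_lower
z = (k - mean) / (n * 0.25) ** 0.5  # distance from the mean in standard deviations
```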

M2 is a more complex model than M1 because it has a free parameter which allows it to model the data more closely. The ability of Bayes factors to take this into account is a reason why Bayesian inference has been put forward as a theoretical justification for and generalisation of Occam's razor, reducing Type I errors.[7]

See also

- Akaike information criterion

- Approximate Bayesian Computation

- Deviance information criterion

- Model selection

- Schwarz's Bayesian information criterion

- Wallace's Minimum Message Length (MML)

- Statistical ratios

References

- ^ Goodman S (1999). "Toward evidence-based medical statistics. 1: The P value fallacy" (PDF). Ann Intern Med 130 (12): 995–1004. PMID 10383371. http://www.annals.org/cgi/reprint/130/12/995.pdf.

- ^ Goodman S (1999). "Toward evidence-based medical statistics. 2: The Bayes factor" (PDF). Ann Intern Med 130 (12): 1005–13. PMID 10383350. http://www.annals.org/cgi/reprint/130/12/1005.pdf.

- ^ Robert E. Kass and Adrian E. Raftery (1995) "Bayes Factors", Journal of the American Statistical Association, Vol. 90, No. 430, p. 791.

- ^ Toni, T.; Stumpf, M.P.H. (2009). "Simulation-based model selection for dynamical systems in systems and population biology" (PDF). Bioinformatics 26 (1): 104–10. doi:10.1093/bioinformatics/btp619. PMC 2796821. PMID 19880371. http://bioinformatics.oxfordjournals.org/cgi/reprint/26/1/104.pdf.

- ^ H. Jeffreys, The Theory of Probability (3e), Oxford (1961); p. 432

- ^ Good, I.J. (1979). "Studies in the History of Probability and Statistics. XXXVII A. M. Turing's statistical work in World War II". Biometrika 66 (2): 393–396. doi:10.1093/biomet/66.2.393. MR82c:01049.

- ^ Sharpening Ockham's Razor On a Bayesian Strop

- Gelman, A., Carlin, J., Stern, H. and Rubin, D. Bayesian Data Analysis. Chapman and Hall/CRC. (1995)

- Bernardo, J., and Smith, A.F.M., Bayesian Theory. John Wiley. (1994)

- Lee, P.M. Bayesian Statistics. Arnold. (1989).

- Denison, D.G.T., Holmes, C.C., Mallick, B.K., Smith, A.F.M., Bayesian Methods for Nonlinear Classification and Regression. John Wiley. (2002).

- Richard O. Duda, Peter E. Hart, David G. Stork (2000) Pattern classification (2nd edition), Section 9.6.5, pp. 487–489, Wiley, ISBN 0-471-05669-3

- Chapter 24 in Probability Theory - The logic of science by E. T. Jaynes, 1994.

- David J.C. MacKay (2003) Information theory, inference and learning algorithms, CUP, ISBN 0-521-64298-1, (also available online)

- Winkler, Robert, Introduction to Bayesian Inference and Decision, 2nd Edition (2003), Probabilistic. ISBN 0-9647938-4-9.

External links

- Bayesian critique of classical hypothesis testing

- Web-based Bayes-factor calculator for t-tests, regression designs, and binomially distributed data

- The on-line textbook: Information Theory, Inference, and Learning Algorithms, by David J.C. MacKay, discusses Bayesian model comparison in Chapter 28, p. 343.